Software Workshop

The Software Workshop is a unique Central Scientific Facility that brings together scientists and software engineers to translate basic research into robust and standardized software systems.

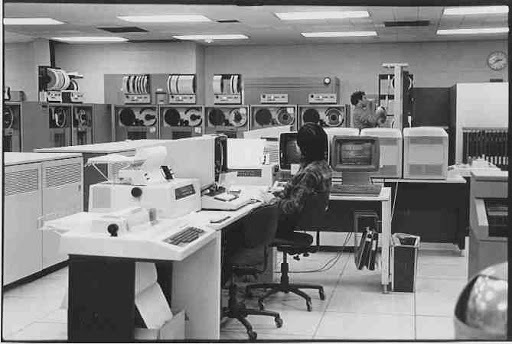

The Columbia Computer Center IBM Machine Room, about 1980. Photo: Bob Resnikoff.

Our goal is to increase the impact of the research conducted at the Max Planck Institute for Intelligent Systems by

- creating innovative software solutions for scientific purposes, always following industry standards

- improving existing code written by researchers (porting to a new framework, optimization, parallelization, ...)

- disseminating expertise and promoting good practices in software development (wiki, reviews, trainings)

- assisting scientists in designing, deploying, and maintaining their software

- providing scientists with a state-of-the-art infrastructure for software development

- developing tools that improve the workflow of scientists

Fields of expertise

- Programming languages: C++, Python, CMake, C#, Matlab, LaTeX, CUDA, bash, powershell

- Technologies: git, django, celery, Qt, PyQt, REST API, docker, Ansible, Kinect2, OpenCV, Caffe2, pytorch, iOS

- Tools: Confluence, Jira, Bamboo, Crucible, GitLab, download center

- Methodologies: Agile software development, sprints, daily scrums


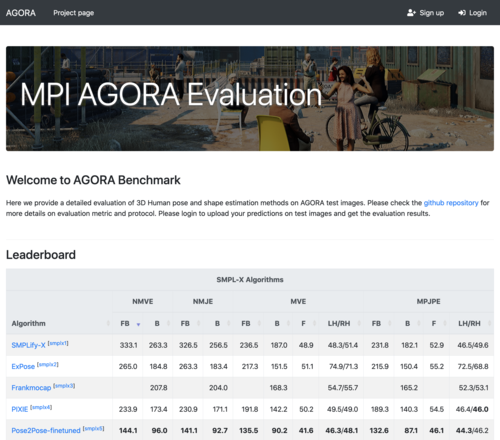

Evaluation servers

2023-12-14

Web servers to rank and compare human pose estimation algorithms.

python django docker ansible

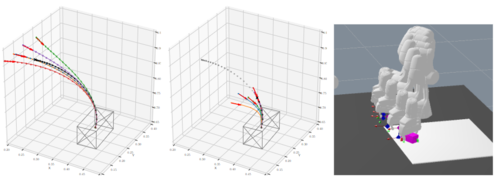

LQR Teleoperation

2023-06-22

Shared control framework combining human and robot agents.

python c++ cython cmake

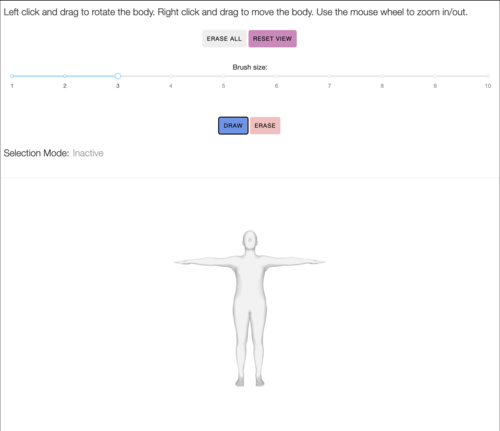

Mesh Annotator

2023-02-21

Contact labeling application for selecting body regions / mesh vertices on Amazon Mechanical Turk.

python dash javascript docker ansible

Coffee tracker

2022-11-11

An application for tracking consumption across several "coffee rooms" and facilitating payments.

python django celery

Mechanical Turk Manager

2022-04-01

Web application for interfacing with Amazon Mechanical Turk.

python django ansible mechanical turk

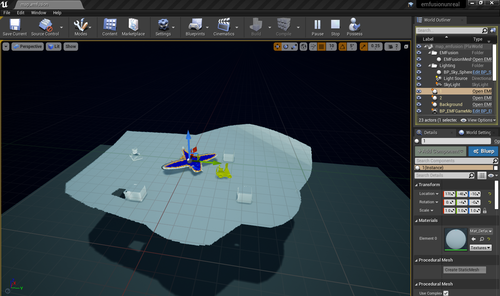

EMFusion++

2022-02-25

A VR demonstrator for an in-house SLAM algorithm integrated with commercial VR hardware (headset + camera).

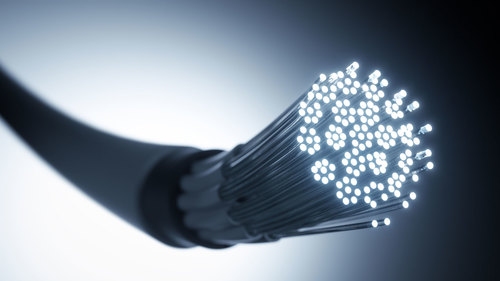

Fiber-based shape sensor

2021-09-29

Grassroots initiative to develop a novel shape probe made from a multimode optical fiber.

Multitensor

2021-01-29

Library for multilayer network tensor factorization.

https://github.com/MPI-IS/multitensor

python c++ cython cmake

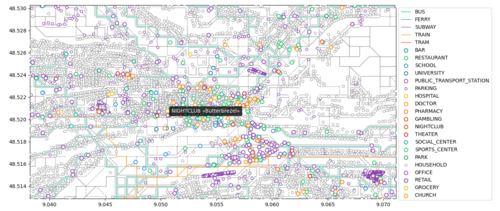

CityGraph

2020-05-21

A Python framework for representing a city (real or virtual) and moving around it.

https://github.com/MPI-IS/CityGraph

python openstreetmap

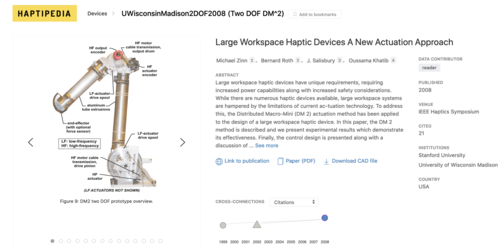

Haptipedia

2020-04-06

An online, open-source visualization of a growing database of haptic devices invented since 1992.

python django javascript

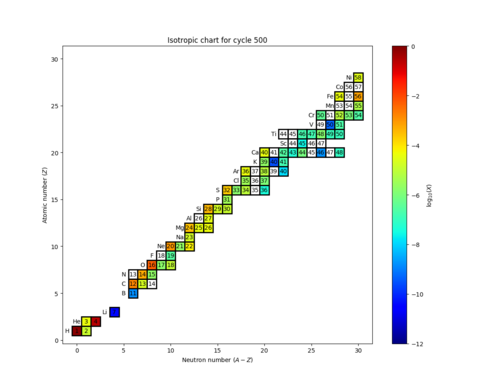

NuGridPy

2019-12-27

Python framework for analyzing data produced by stellar astrophysical codes.

https://github.com/NuGrid/NuGridPy

python hdf5

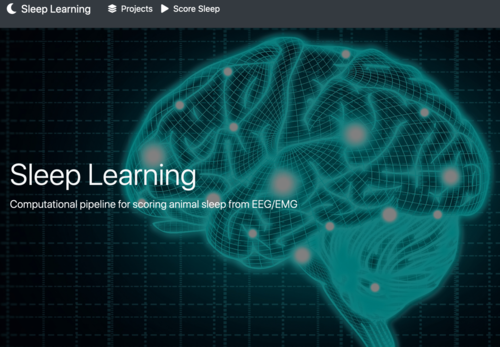

Sleep Learning

2019-09-04

An application for collecting, organizing, and post-processing EEG and EMG data from animal sleep.

python django celery dash post-processing

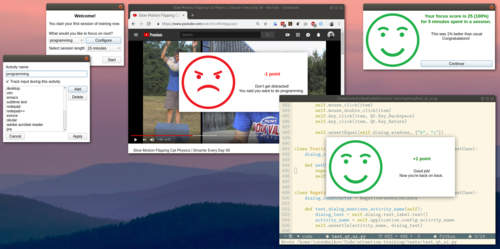

Attention Training

2019-06-17

A productivity application for tracking and training the user's ability to focus on a chosen task.

python pyqt multi-threading

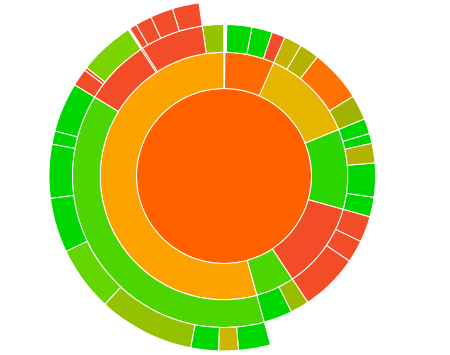

Code Cov

2019-05-08

A tool for analyzing the coverage of Python code by extracting coverage data and visualizing it in an interactive web interface.

python dash

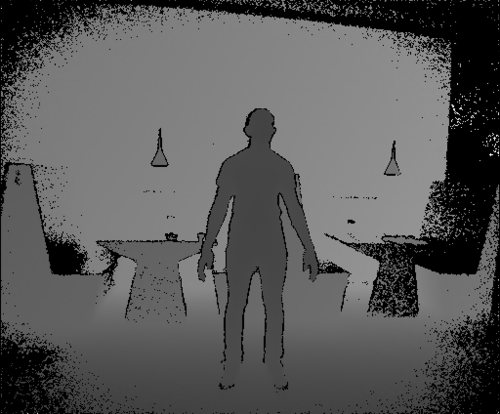

Monocle

2019-02-24

Application for capturing data from the Kinect 2.0 device.

https://github.com/MPI-IS/monocle

c# kinect sdk makefile

Robot Data Collector

2019-01-25

A web application for managing, organizing, and sharing robotic data.

python django celery rest api

Mesh Library

2018-08-17

When working in 3D graphics, one needs to load raw data, process it in various ways, visualize the results to aid understanding, and then save the output in different formats. We release the Mesh Library to facilitate all of these operations. The library is built on top of OpenGL and CGAL, with an easy-to-use Python interface. Beyond basic usage such as data I/O and interactive visualization, it also supports more complex functionality such as texture rendering, visibility computation, and geometry arithmetic. We hope the release of this tool makes entry into the 3D world smoother for interested people.

https://github.com/MPI-IS/mesh

mesh library 3d graphics

Accelerated K-means

2018-06-15

An efficient and generic implementation of the k-means clustering algorithm.

c++ boost cmake cython python matlab
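
For readers unfamiliar with k-means, the following is a minimal sketch of the textbook Lloyd iterations in Python with NumPy. It is not the accelerated implementation described above, and the function name `kmeans` and its parameters are chosen here for illustration only.

```python
import numpy as np


def kmeans(points, k, n_iter=100, seed=0):
    """Plain Lloyd iterations on an (n_samples, dim) array (illustrative only)."""
    rng = np.random.default_rng(seed)
    # Initialize the centers with k distinct data points picked at random.
    centers = points[rng.choice(len(points), size=k, replace=False)].astype(float)
    for _ in range(n_iter):
        # Squared Euclidean distance from every point to every center: (n, k).
        d2 = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # Move each center to the mean of its assigned points.
        new_centers = centers.copy()
        for j in range(k):
            members = points[labels == j]
            if len(members):
                new_centers[j] = members.mean(axis=0)
        if np.allclose(new_centers, centers):
            break  # assignments are stable
        centers = new_centers
    return centers, labels


if __name__ == "__main__":
    rng = np.random.default_rng(42)
    # Two well-separated synthetic blobs.
    data = np.vstack([rng.normal(0.0, 0.1, (50, 2)), rng.normal(10.0, 0.1, (50, 2))])
    centers, labels = kmeans(data, k=2)
    print(centers)
```

Each iteration of this sketch compares every point with every center, which is the cost that accelerated variants typically aim to reduce.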

OpenPhd Guiding

2017-08-01

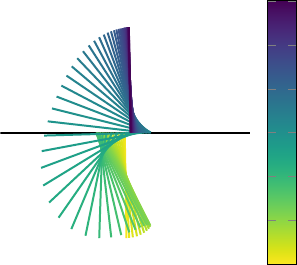

Algorithm using a learned motion correction based on a Gaussian Process to produce better images from a telescope.

c++ boost cmake

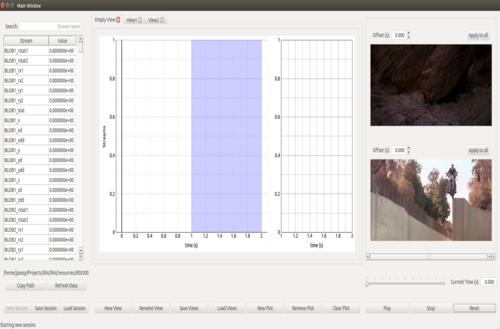

Robot Analysis Infrastructure

2017-06-30

An application for simultaneously analyzing video and sensor data from robotic experiments.

python pyqt opencv

Scan Manager

2017-04-07

A web application for managing the growing number of body scans.

python django celery rabbitmq

Distributed brain-computer interface

2017-02-20

A brain-computer interface (BCI) to assist and interpret thoughts from patients suffering from diseases such as amyotrophic lateral sclerosis. This monitoring tool is especially suited for research and for reaching patients living in remote locations.

https://is.mpg.de/person/mhohmann

c++ qt mqtt

Livius

2015-07-13

The tool we developed for generating the MLSS 2015 videos.

python batch processing video ffmpeg

Code Doc

2015-04-01

A Django application for hosting and accessing documentation and distributions generated from source code.

python django documentation continuous-integration rest api

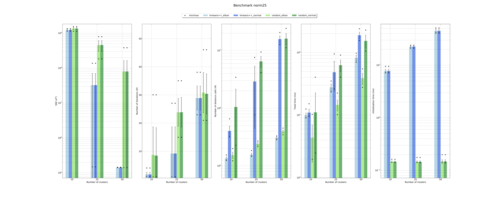

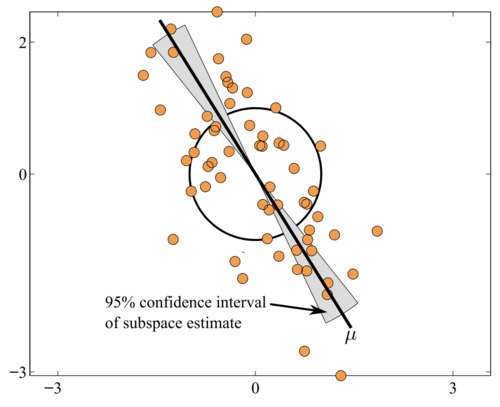

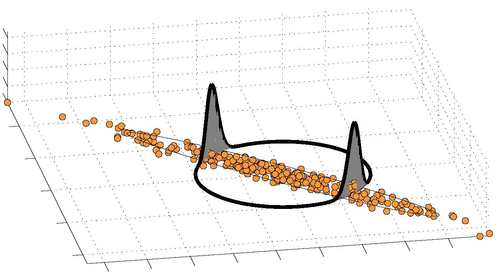

Robust and scalable PCA using Grassmann averages

2014-06-01

Grassmann Averages PCA is a method for extracting the principal components from a set of vectors, with the following useful properties: 1) its complexity is linear in both the dimension of the vectors and the size of the data, which makes the method highly scalable; 2) it is more robust to outliers than PCA, in the sense that it minimizes an L1 norm instead of the L2 norm of standard PCA. It comes in two variants: 1) the standard computation, referred to as the GA, which coincides with PCA for normally distributed data; 2) a trimmed variant, referred to as the TGA, which is more robust to outliers. We provide implementations of the Grassmann Average, the Trimmed Grassmann Average, and the Grassmann Median. The simplest is the Matlab implementation used in the CVPR 2014 paper, but we also provide a faster C++ implementation, which can be used either directly from C++ or through a Matlab wrapper interface. The repository contains the following:

- a C++ multi-threaded implementation of the GA and TGA

- a C++ multi-threaded implementation of the EM-PCA (for comparisons)

- binaries that compute the GA, TGA and EM-PCA on a set of images (frames of a video)

- Matlab bindings

- Documentation of the C++ API
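
To give a feel for the standard computation (GA), here is a minimal NumPy sketch of the leading-component iteration as we understand it from the published method. It is not the Matlab or C++ code in the repository. It assumes zero-mean input, and the function name `grassmann_average` and its parameters are hypothetical.

```python
import numpy as np


def grassmann_average(X, n_iter=100, tol=1e-10, seed=0):
    """Estimate the leading component of zero-mean data X (n_samples, dim).

    Illustrative sketch only: each sample spans a 1-D subspace, represented
    by a unit vector and weighted by the sample norm. The estimate q is the
    weighted average of those unit vectors after flipping each sign to agree
    with the current q.
    """
    rng = np.random.default_rng(seed)
    norms = np.linalg.norm(X, axis=1)
    keep = norms > 0                      # zero vectors span no subspace
    U = X[keep] / norms[keep, None]       # unit representatives
    w = norms[keep]                       # weights
    q = rng.standard_normal(X.shape[1])   # random starting direction
    q /= np.linalg.norm(q)
    for _ in range(n_iter):
        signs = np.sign(U @ q)
        signs[signs == 0] = 1.0           # break ties arbitrarily
        q_new = (w * signs) @ U           # weighted, sign-aligned sum
        q_new /= np.linalg.norm(q_new)
        converged = np.linalg.norm(q_new - q) < tol
        q = q_new
        if converged:
            break
    return q


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    # Synthetic Gaussian data with most variance along the first axis.
    X = rng.standard_normal((500, 3)) * np.array([5.0, 1.0, 0.5])
    print(grassmann_average(X - X.mean(axis=0)))
```

Each iteration costs one pass over the data, which is consistent with the linear complexity noted above. Further components and the trimmed variant are not covered by this sketch.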

2023

Glare Removal for Astronomical Images with High Local Dynamic Range

Bastelaer, M., Kremer, H., Volchkov, V., Passy, J., Schölkopf, B.

Glare Removal for Astronomical Images with High Local Dynamic Range, pages: 1-11, 2023 (conference)

Augmenting Human Policies using Riemannian Metrics for Human-Robot Shared Control

Oh, Y., Passy, J., Mainprice, J.

In Proceedings of the IEEE International Symposium on Robot and Human Interactive Communication (RO-MAN), pages: 1612-1618, Busan, South Korea, August 2023 (inproceedings)

DECO: Dense Estimation of 3D Human-Scene Contact in the Wild

Tripathi, S., Chatterjee, A., Passy, J., Yi, H., Tzionas, D., Black, M. J.

In Proc. International Conference on Computer Vision (ICCV), International Conference on Computer Vision 2023, October 2023 (inproceedings) Accepted

Resonant Kushi-comb-like multi-frequency radiation of oscillating two-color soliton molecules

Melchert, O., Willms, S., Oreshnikov, I., Yulin, A., Morgner, U., Babushkin, I., Demircan, A.

New Journal of Physics, 25(1), 2023 (article)

2022

Heteronuclear soliton molecules with two frequencies

Willms, S., Melchert, O., Bose, S., Yulin, A., Oreshnikov, I., Morgner, U., Babushkin, I., Demircan, A.

Physical Review A, 105, 2022 (article)

Cherenkov radiation and scattering of external dispersive waves by two-color solitons

Oreshnikov, I., Melchert, O., Willms, S., Bose, S., Babushkin, I., Demircan, A., Morgner, U., Yulin, A.

Physical Review A, 106(5):53514, 2022 (article)

Can we improve self-regulation during computer-based work with optimal feedback?

Wirzberger, M., Lado, A., Prentice, M., Oreshnikov, I., Passy, J., Stock, A., Lieder, F.

Behaviour & Information Technology, November 2022 (article) Submitted

2021

A pupillary index of susceptibility to decision biases

Eldar, E., Felso, V., Cohen, J. D., Niv, Y.

Nature Human Behaviour, 5(5):653-662, 2021 (article)

The o80 C++ templated toolbox: Designing customized Python APIs for synchronizing realtime processes

Berenz, V., Naveau, M., Widmaier, F., Wüthrich, M., Passy, J., Guist, S., Büchler, D.

Journal of Open Source Software, 6(66):Article no. 2752, October 2021 (article)

2020

How to navigate everyday distractions: Leveraging optimal feedback to train attention control

Wirzberger, M., Lado, A., Eckerstorfer, L., Oreshnikov, I., Passy, J., Stock, A., Shenhav, A., Lieder, F.

Proceedings of the 42nd Annual Meeting of the Cognitive Science Society, Cognitive Science Society, July 2020 (conference)

ACTrain: Ein KI-basiertes Aufmerksamkeitstraining für die Wissensarbeit

Wirzberger, M., Oreshnikov, I., Passy, J., Lado, A., Shenhav, A., Lieder, F.

66th Spring Conference of the German Ergonomics Society, 2020 (conference)

2018

The effect of binding energy and resolution in simulations of the common envelope binary interaction

Iaconi, R., De Marco, O., Passy, J., Staff, J.

Monthly Notices of the Royal Astronomical Society, 477, June 2018 (article)

2017

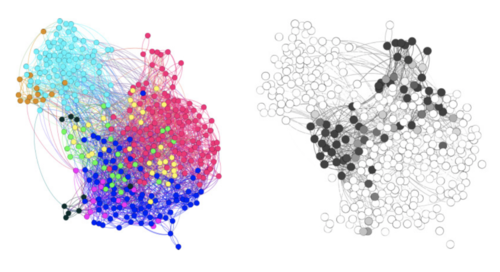

Design of a visualization scheme for functional connectivity data of Human Brain

The effect of a wider initial separation on common envelope binary interaction simulations

Iaconi, R., Reichardt, T., Staff, J., De Marco, O., Passy, J., Price, D., Wurster, J., Herwig, F.

Monthly Notices of the Royal Astronomical Society, 464, pages: 4028-4044, 2017 (article)

Common Envelope Light Curves. I. Grid-code Module Calibration

Galaviz, P., De Marco, O., Passy, J., Staff, J., Iaconi, R.

Astrophysical Journal, Supplement, 229, pages: 36, 2017 (article)

Nonparametric Disturbance Correction and Nonlinear Dual Control

2015

Scalable Robust Principal Component Analysis using Grassmann Averages

Hauberg, S., Feragen, A., Enficiaud, R., Black, M.

IEEE Trans. Pattern Analysis and Machine Intelligence (PAMI), December 2015 (article)