The Numerics of GANs

2017

Conference Paper

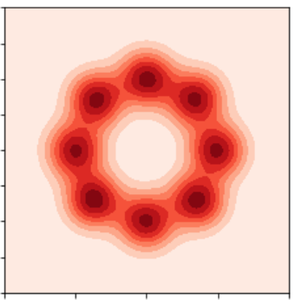


In this paper, we analyze the numerics of common algorithms for training Generative Adversarial Networks (GANs). Using the formalism of smooth two-player games, we analyze the gradient vector field associated with GAN training objectives. Our findings suggest that the convergence of current algorithms suffers from two factors: i) the presence of eigenvalues of the Jacobian of the gradient vector field with zero real part, and ii) eigenvalues with a large imaginary part. Using these findings, we design a new algorithm that overcomes some of these limitations and has better convergence properties. Experimentally, we demonstrate its superiority in training common GAN architectures and show convergence on GAN architectures that are notoriously hard to train.
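To make the first failure mode concrete, here is a minimal sketch, not the algorithm proposed in the paper. It assumes a toy bilinear game L(θ, ψ) = θψ (chosen purely for illustration), where θ minimizes and ψ maximizes. Its gradient vector field v(θ, ψ) = (-ψ, θ) has a Jacobian with purely imaginary eigenvalues ±i, so plain simultaneous gradient steps have an update Jacobian I + hJ with eigenvalues 1 ± ih, whose modulus exceeds 1. The helper name `simultaneous_gd_bilinear` is hypothetical.

```python
import numpy as np


def simultaneous_gd_bilinear(theta0, psi0, h, steps):
    """Simultaneous gradient descent/ascent on the toy game L = theta * psi.

    Hypothetical helper for illustration only. Returns the distance of each
    iterate from the equilibrium (0, 0).
    """
    theta, psi = theta0, psi0
    norms = []
    for _ in range(steps):
        # Both players update at the same time from the old iterates:
        # theta descends along dL/dtheta = psi, psi ascends along dL/dpsi = theta.
        theta, psi = theta - h * psi, psi + h * theta
        norms.append(np.hypot(theta, psi))
    return np.array(norms)


# Jacobian of the gradient vector field v(theta, psi) = (-psi, theta).
J = np.array([[0.0, -1.0], [1.0, 0.0]])
eig_J = np.linalg.eigvals(J)  # purely imaginary eigenvalues: +i and -i

h = 0.1  # step size (assumed for the example)
# Jacobian of one simultaneous update x -> x + h * v(x) is I + h * J.
eig_update = np.linalg.eigvals(np.eye(2) + h * J)  # 1 + ih and 1 - ih
spectral_radius = np.max(np.abs(eig_update))  # equals sqrt(1 + h**2) > 1

norms = simultaneous_gd_bilinear(1.0, 1.0, h, 100)
print("eigenvalues of J:", eig_J)
print("spectral radius of update:", spectral_radius)
print("distance from equilibrium after 100 steps:", norms[-1])
```

In this toy case the distance from (0, 0) grows by exactly sqrt(1 + h²) per step, so the iterates spiral outward instead of converging; shrinking h slows the divergence but does not remove it.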

| Author(s): | Lars Mescheder and Sebastian Nowozin and Andreas Geiger |

| Book Title: | Proceedings from the conference "Neural Information Processing Systems 2017" |

| Year: | 2017 |

| Month: | December |

| Day: | 4-9 |

| Editors: | Guyon I. and Luxburg U.v. and Bengio S. and Wallach H. and Fergus R. and Vishwanathan S. and Garnett R. |

| Publisher: | Curran Associates, Inc. |

| Department(s): | Autonomous Vision |

| Research Project(s): | Convergence and Stability of GAN training |

| Bibtex Type: | Conference Paper (inproceedings) |

| Event Name: | Advances in Neural Information Processing Systems 30 (NIPS 2017) |

| Event Place: | Long Beach, CA, USA |

| Links: | pdf |

BibTeX

@inproceedings{Mescheder2017NIPS,
  title = {The Numerics of {GANs}},
  author = {Mescheder, Lars and Nowozin, Sebastian and Geiger, Andreas},
  booktitle = {Proceedings from the conference "Neural Information Processing Systems 2017"},
  editor = {Guyon, I. and von Luxburg, U. and Bengio, S. and Wallach, H. and Fergus, R. and Vishwanathan, S. and Garnett, R.},
  publisher = {Curran Associates, Inc.},
  month = dec,
  year = {2017},
  month_numeric = {12}
}
